Story | Aider + Claude 3.5 Sonnet Works Really Well With Elixir

Page information

Author: Gayle | Date: 25-03-04 15:20 | Views: 124 | Comments: 0

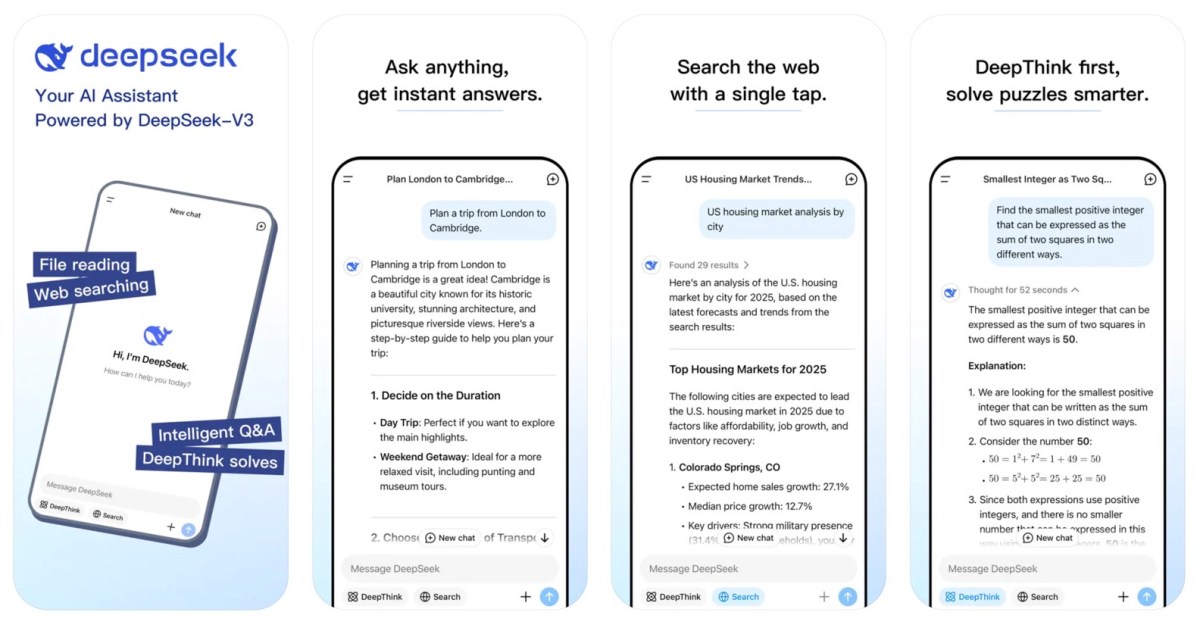

Founded in 2023 by Liang Wenfeng and headquartered in Hangzhou, Zhejiang, DeepSeek is backed by the hedge fund High-Flyer. Founded in 2025, we help you master DeepSeek tools, explore ideas, and improve your AI workflow. A rule-based reward system, described in the model's white paper, was designed to help DeepSeek-R1-Zero learn to reason. If you want help with math and reasoning tasks such as debugging and code writing, you can choose the DeepSeek R1 model. The model is optimized for writing, instruction-following, and coding tasks, and introduces function calling capabilities for external tool interaction. Breakthrough in open-source AI: DeepSeek, a Chinese AI company, has released DeepSeek-V2.5, a powerful new open-source language model that combines general language processing and advanced coding capabilities. The model's combination of general language processing and coding capabilities sets a new standard for open-source LLMs. JSON context-free grammar: this setting takes a CFG that specifies the standard JSON grammar adopted from ECMA-404. The licensing restrictions reflect a growing awareness of the potential misuse of AI technologies. The open-source nature of DeepSeek-V2.5 could accelerate innovation and democratize access to advanced AI technologies. DeepSeek-V2.5 was released on September 6, 2024, and is available on Hugging Face, with both web and API access.
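To make the idea of a rule-based reward more concrete, here is a minimal sketch in Python. It is not DeepSeek's implementation: the tag layout (`<think>` and `<answer>`), the function name `rule_based_reward`, and the reward values 0.5 and 1.0 are all assumptions chosen for illustration. It only shows the general shape of such a scheme, where fixed rules score the output format and the correctness of the final answer instead of a learned reward model.

```python
import re

# Assumed output layout (hypothetical): reasoning inside <think>...</think>,
# followed by the final answer inside <answer>...</answer>.
_FORMAT = re.compile(
    r"^<think>.+?</think>\s*<answer>(.+?)</answer>$",
    re.DOTALL,
)


def rule_based_reward(completion: str, reference: str) -> float:
    """Score a completion with fixed rules only (no learned reward model).

    The weights below are illustrative assumptions, not published values.
    """
    match = _FORMAT.match(completion.strip())
    if match is None:
        # Format rule failed: no reward at all.
        return 0.0

    format_reward = 0.5  # the completion followed the expected layout
    answer = match.group(1).strip()
    # Accuracy rule: exact string match against a known reference answer.
    accuracy_reward = 1.0 if answer == reference.strip() else 0.0
    return format_reward + accuracy_reward
```

A well-formed completion with the right answer earns both rewards, a well-formed one with a wrong answer earns only the format reward, and anything that ignores the layout earns nothing.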

Use DeepSeek's open-source model to quickly build professional web applications. The accessibility of such advanced models could lead to new applications and use cases across various industries. By open-sourcing its models, code, and data, DeepSeek LLM hopes to promote widespread AI research and commercial applications. Absolutely outrageous, and an incredible case study by the research team. The case study revealed that GPT-4, when provided with instrument images and pilot instructions, can effectively retrieve quick-access references for flight operations. If you have registered for an account, you may also access, review, and update certain personal information that you have provided to us by logging into your account and using the available features and functionalities. We use your information to operate, provide, develop, and improve the Services, including for the following purposes. Later in this issue we look at 200 use cases for post-2020 AI. AI models being able to generate code unlocks all kinds of use cases.

[…]’ fields about their use of large language models. Day 4: Optimized Parallelism Strategies - Likely focused on improving computational efficiency and scalability for large-scale AI models. The model is optimized for both large-scale inference and small-batch local deployment, enhancing its versatility. DeepSeek-V2.5 uses Multi-Head Latent Attention (MLA) to reduce the KV cache and improve inference speed. The LLM was trained on a large dataset of 2 trillion tokens in both English and Chinese, using architectures such as LLaMA and Grouped-Query Attention. Note: this model is biling[…] is itself an important takeaway: we have a situation where AI models are teaching AI models, and where AI models are teaching themselves. The idea has been that, in the AI gold rush, buying Nvidia stock was investing in the company that was making the shovels.
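The claim that attention variants shrink the KV cache can be illustrated with some back-of-the-envelope arithmetic. The sketch below is a simplified model, not a measurement of any DeepSeek model: every dimension (32 layers, 32 heads, head size 128, 8 KV heads, latent width 512, 2 bytes per value) is a hypothetical placeholder, and the latent case assumes a single compressed vector is cached per layer per token, which glosses over details of the real MLA design.

```python
def kv_bytes_per_token(n_layers: int, n_kv_heads: int, head_dim: int,
                       bytes_per_value: int = 2) -> int:
    """Cache size per token when full keys and values are stored.

    The factor 2 accounts for storing both a key and a value per KV head.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value


def latent_bytes_per_token(n_layers: int, latent_dim: int,
                           bytes_per_value: int = 2) -> int:
    """Cache size per token when one compressed latent vector is stored
    per layer (a simplification of latent-attention caching)."""
    return n_layers * latent_dim * bytes_per_value


# Hypothetical model dimensions, chosen only for illustration.
N_LAYERS, N_HEADS, HEAD_DIM = 32, 32, 128

mha = kv_bytes_per_token(N_LAYERS, n_kv_heads=N_HEADS, head_dim=HEAD_DIM)
gqa = kv_bytes_per_token(N_LAYERS, n_kv_heads=8, head_dim=HEAD_DIM)
latent = latent_bytes_per_token(N_LAYERS, latent_dim=512)

print(f"multi-head attention:    {mha} bytes/token")
print(f"grouped-query attention: {gqa} bytes/token")
print(f"compressed latent:       {latent} bytes/token")
```

Under these assumed numbers, sharing KV heads across query heads cuts the cache by the ratio of query heads to KV heads, and caching a compressed latent cuts it further. A smaller cache per token is what allows longer contexts or larger batches in the same memory.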

